
March 25, 2026

Your rankings seem stable, but organic sessions are struggling to turn into revenue. Often, the problem is not only the choice of keyword: it is the quality of the experience on landing pages. A performance SEO audit differs from a general SEO audit: it measures the speed, visual stability, and responsiveness perceived by visitors, connects these signals to your templates (home, collection, product page), and produces a prioritized action plan. This guide draws on the Google Search Central documentation on Core Web Vitals, the web.dev guides on Web Vitals and on Interaction to Next Paint (INP), the Chrome UX Report, PageSpeed Insights, Shopify best practices for themes, the Shopify SEO handbook, and Shopify's post on e-commerce SEO best practices. For the broader audit method (crawlability, indexing, content), refer to the e-commerce SEO audit guide: here, the focus is deliberately on perceived performance and how to manage it.

“Core Web Vitals are a set of metrics that measure the real loading, interactivity, and visual stability of the page.”

Google Search Central, Core Web Vitals documentation (free translation)


SEO performance audit: definition and scope

A performance SEO audit is a targeted analysis of useful loading speed, responsiveness to interactions, and layout stability. It complements, without replacing, the review of keywords, internal linking, and indexing. The goal is twofold: improve what users experience (less abandonment, better conversion) and align the store with Google’s expectations around Core Web Vitals, presented as signals related to experience in search results.

A typical scope includes: selecting representative URLs by page type, collecting field metrics when available (Chrome UX Report data), comparing them with lab measurements from PageSpeed Insights, and identifying heavy or blocking resources, third-party scripts, unoptimized images, and technical debt in the theme or apps. It is not just a race for scores: a good audit links each metric to a plausible cause and to a development or content task. Crawl and indexing monitoring remains necessary alongside it: both views are needed to prioritize budget and development.

Why page experience matters for SEO

Google stresses that ranking rests on many signals; experience metrics are not the only lever. Even so, a slow or unstable store hurts usage: visitors leave before reaching the cart, which indirectly affects your business indicators and the quality of behavioral signals. Google's Basic Requirements also remind us that manipulative techniques must be avoided: optimizing performance in the service of the user remains consistent with a sustainable strategy.

On the editorial side, always cross-check technical performance against the guide on creating useful content: a fast page that does not meet search intent will not "save" your SEO. The performance audit must therefore be read together with your brand-stage SEO strategy and with the e-commerce SEO guide.

The three Core Web Vitals: what you’re really measuring

The three main metrics are described in detail on web.dev. In operational terms for an e-commerce site:

| Metric | Question asked | Merchant interpretation angle |
|---|---|---|
| LCP (Largest Contentful Paint) | When is the main visible content rendered? | Hero image, banner, product media above the fold: check size, loading priority, and formats. |
| INP (Interaction to Next Paint) | Does the page respond quickly after an interaction? | Add to cart, collection filters, search: latency may come from the main JavaScript or third-party scripts. |
| CLS (Cumulative Layout Shift) | Does the layout shift while loading? | Ads, embedded reviews, web fonts: instability = accidental clicks and frustration at checkout. |

INP has taken center stage in discussions about interactivity: the web.dev documentation on INP explains how this metric differs from First Input Delay (FID), which it replaced as a Core Web Vital in March 2024, and why it better reflects the real experience. For your audit, list the critical interactions in your purchase journey and test them on mobile, not just on desktop.
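To make the three metrics easier to read in an audit spreadsheet, the values can be bucketed into the "good", "needs improvement", and "poor" bands. The sketch below assumes the thresholds published on web.dev at the time of writing (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, CLS 0.1 / 0.25); the function and variable names are illustrative, and the thresholds should be checked against the current documentation before use.

```python
# Assumed thresholds (good upper bound, poor lower bound), as published on web.dev.
# LCP and INP are in milliseconds; CLS is a unitless score.
THRESHOLDS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric: str, value: float) -> str:
    """Return the band for a metric value, typically a 75th-percentile field value."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"


if __name__ == "__main__":
    # Hypothetical values for a product page on mobile.
    for metric, value in {"LCP": 3100, "INP": 180, "CLS": 0.31}.items():
        print(metric, value, rate(metric, value))
```

Bands like these are most meaningful on field data; applied to a single lab run, they only indicate a direction.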

Real data, lab and representative samples

A common mistake is to optimize for the PageSpeed Insights score on a single URL, a single device, and a single moment. Aggregated field data (such as that exposed via CrUX) reflects varied network and hardware conditions: it can differ greatly from a lab test. For a useful audit:

Choose representative URLs: homepage, a high-traffic collection, a "typical" product page, a blog article page that captures organic traffic.

Compare mobile and desktop: e-commerce traffic is often mostly mobile; bottlenecks differ.

Document the context: active theme, list of apps, presence of a consent banner, A/B tests, marketing preloading.

Avoid drawing conclusions too quickly: an "average" metric over a short period can hide spikes after a deployment or a campaign.

The Chrome UX Report describes how this aggregated data is built: useful for understanding what you see in the tools that draw on it.
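One way to put field and lab values side by side is to read both from a single PageSpeed Insights API response. The sketch below builds a request URL for the public v5 endpoint and extracts the LCP from each source. The field names used (`loadingExperience`, `LARGEST_CONTENTFUL_PAINT_MS`, `lighthouseResult`, `numericValue`) reflect the response layout as we understand it and should be verified against the API reference; the sample payload is hand-written, not real data.

```python
from urllib.parse import urlencode

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url: str, strategy: str = "mobile") -> str:
    """Build the request URL for one page and one device strategy."""
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def lcp_field_vs_lab(payload: dict) -> dict:
    """Extract field (75th percentile) and lab LCP in ms; None when a source is missing."""
    field = (
        payload.get("loadingExperience", {})
        .get("metrics", {})
        .get("LARGEST_CONTENTFUL_PAINT_MS", {})
        .get("percentile")
    )
    lab = (
        payload.get("lighthouseResult", {})
        .get("audits", {})
        .get("largest-contentful-paint", {})
        .get("numericValue")
    )
    gap = None if field is None or lab is None else field - lab
    return {"field_p75_ms": field, "lab_ms": lab, "gap_ms": gap}


if __name__ == "__main__":
    # Hand-written payload that mimics the assumed response structure.
    sample = {
        "loadingExperience": {
            "metrics": {"LARGEST_CONTENTFUL_PAINT_MS": {"percentile": 3100}}
        },
        "lighthouseResult": {
            "audits": {"largest-contentful-paint": {"numericValue": 1900.0}}
        },
    }
    print(psi_request_url("https://example.com/products/sample"))
    print(lcp_field_vs_lab(sample))
```

A large positive gap suggests that real visitors experience slower conditions than the lab run simulates, which is a reason to favor field data when prioritizing.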

Tools and reports: correspondence table

| Need | Tool or source | Role in the audit |
|---|---|---|
| Summary view by URL (lab + field when available) | PageSpeed Insights | Identify the listed opportunities (images, scripts, fonts) and the Core Web Vitals metrics. |
| Understanding metrics and thresholds | web.dev Vitals | Train the team on LCP, INP, CLS, and associated best practices. |
| Google Search guidance on CWV | Google Search Central | Align internal SEO messaging with the official definitions used in the search context. |
| Shopify theme and storefront performance | Theme performance (shopify.dev) | Prioritize projects compatible with the Liquid ecosystem, sections, and apps. |
| Basic SEO settings (titles, descriptions) | Shopify SEO manual | Make sure the "content and tags" layer remains consistent after technical optimizations. |

If you use Google Search Console on the domain property, the reports related to page experience and Core Web Vitals (when they are available for your URLs) provide a view by page group: cross-reference them with your analytics performance exports to identify the site sections where the degradation costs the most in sessions or revenue.
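The cross-referencing described above can be sketched as a simple join between two exports. This example assumes you have already reduced each export to a dictionary keyed by site section; the section names, status labels, and revenue figures are hypothetical.

```python
def costliest_sections(
    cwv_status: dict[str, str], revenue: dict[str, float]
) -> list[tuple[str, float]]:
    """List sections whose Core Web Vitals status is not 'good', highest revenue first."""
    degraded = [
        (section, revenue.get(section, 0.0))
        for section, status in cwv_status.items()
        if status != "good"
    ]
    return sorted(degraded, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical data: status per page group and organic revenue per section.
    status = {"/collections": "poor", "/products": "needs improvement", "/blogs": "good"}
    organic_revenue = {"/collections": 12000.0, "/products": 45000.0, "/blogs": 800.0}
    for section, amount in costliest_sections(status, organic_revenue):
        print(section, amount)
```

Sorting by revenue rather than by metric value keeps the discussion on where degradation costs the most, not on where the score looks worst.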

Audit checklist by e-commerce template

Each template has its own constraints. Use this grid as a working checklist, not as an exhaustive list: adapt it to your catalog and your markets.

| Template | Performance checkpoints | SEO / UX risks |
|---|---|---|
| Home | Carousel or hero media, partner logos, personalization scripts | High LCP if the main media is heavy; CLS if the carousel injects variable heights. |
| Collection / category | Filters, sorting, pagination, image grids | INP if filtering reloads too much JavaScript; LCP on the first row of products. |
| Product page | Gallery, zoom, third-party reviews, recommendations | Review or recommendation scripts that delay interaction; CLS if the price or buy button shifts. |
| Cart / checkout | Trust badges, upsell, shipping calculator | Each module adds potential blocking time; prioritize simplicity at these steps. |
| Blog / guide | Video embeds, table of contents, CTA callouts | Long-form content: watch out for iframes and ads that increase CLS. |

For each row, document: the tested URL, the device, the metrics before intervention, the cause hypothesis, and the owner (dev, marketing, agency). This rigor avoids "optimizations" that are never validated in production.
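The fields listed above map naturally onto a small record type. This is a minimal sketch, not a prescribed format: the class name, field names, and the `validated_in_production` flag are assumptions introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class TemplateCheck:
    """One checklist row: what was tested, what was measured, and who owns the fix."""
    template: str
    url: str
    device: str
    baseline: dict[str, float]  # metrics before intervention, e.g. {"LCP": 3100}
    hypothesis: str
    owner: str
    validated_in_production: bool = False


def pending_validation(checks: list[TemplateCheck]) -> list[TemplateCheck]:
    """Return the rows whose fix has not yet been confirmed in production."""
    return [check for check in checks if not check.validated_in_production]


if __name__ == "__main__":
    # Hypothetical rows for two templates.
    rows = [
        TemplateCheck("home", "https://example.com/", "mobile",
                      {"LCP": 3400}, "Heavy hero image", "dev", True),
        TemplateCheck("collection", "https://example.com/collections/all", "mobile",
                      {"INP": 420}, "Filter script reloads the grid", "agency"),
    ]
    for row in pending_validation(rows):
        print(row.template, row.owner, row.hypothesis)
```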

Common causes on Shopify and the storefront

Shopify provides a global infrastructure and content delivery mechanisms; perceived performance still depends heavily on your theme, your applications, and third-party code added manually (pixels, tag managers). The theme performance documentation emphasizes development best practices: limit browser work, load JavaScript reasonably, and optimize images. For general SEO, Shopify's post on e-commerce SEO connects speed, user experience, and catalog quality: useful for framing discussions with merchandising teams.

Applications: each installed app can add scripts, network requests, or render-blocking resources. Inventory them and question their real usefulness.

Pixels and tracking: marketing needs data, but too many tags degrade INP. See how to structure tracking in our article on web pixels and insights.

Product images: formats, displayed sizes, and lazy loading are often the first gains on product page (PDP) LCP.

Fonts and icons: poorly configured web font loading can delay text rendering and cause shifts.

Prioritize: user impact, technical effort, SEO risk

Not all optimizations are equal. Use a simple matrix:

| Quadrant | Examples | Decision |
|---|---|---|
| High UX impact, moderate effort | Key image compression, disabling an unused script, fixing a CLS on the add-to-cart button | Plan in a short sprint |
| High impact, high effort | Partial theme redesign, replacing a core app | Project roadmap with regression testing |
| Low impact, low effort | Minor configuration tweaks | Backlog |
| Observed low impact, high effort | Aesthetic redesign without measurable gain on the metrics | Defer or require a business case |
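The matrix above can be expressed as a lookup so that every ticket receives the same treatment. As a simplifying assumption, this sketch reduces impact and effort to two levels each, treating "moderate" effort as not high; the function name is illustrative.

```python
def decide(high_impact: bool, high_effort: bool) -> str:
    """Map an (impact, effort) pair to the decision in the prioritization matrix."""
    decisions = {
        (True, False): "Plan in a short sprint",
        (True, True): "Project roadmap with regression testing",
        (False, False): "Backlog",
        (False, True): "Defer or require a business case",
    }
    return decisions[(high_impact, high_effort)]


if __name__ == "__main__":
    # Hypothetical tickets: (label, high impact?, high effort?)
    tickets = [
        ("Compress hero image", True, False),
        ("Replace core reviews app", True, True),
        ("Aesthetic redesign", False, True),
    ]
    for label, impact, effort in tickets:
        print(f"{label}: {decide(impact, effort)}")
```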

Do not promise quantified ranking gains: the public documentation describes experience signals, not a detailed URL-by-URL ranking scale. Instead, measure the effect on conversion rate, session duration, or revenue per session on the corrected pages.

Deliverable: structure of a performance audit report

A useful report contains at minimum:

Scope and method: tested URLs, tools, dates, environments (mobile, desktop, markets).

Quantified findings: LCP, INP, and CLS values (or the closest available equivalents), with screenshots or exports.

Cause hypotheses: linked to named resources (files, scripts, apps) when verifiable.

Prioritized recommendations: owner, effort estimate, dependencies.

Validation plan: how to retest after go-live, at T+7 and T+30.

This structure extends the spirit of the SEO audit guide by zooming in on the performance layer.
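For the validation plan, the retest dates can be derived from the go-live date so they land in the team calendar at delivery time. A minimal sketch, assuming T+7 and T+30 are counted in calendar days; the function name is hypothetical.

```python
from datetime import date, timedelta


def retest_dates(go_live: date, offsets_days: tuple[int, ...] = (7, 30)) -> list[date]:
    """Return the retest dates at T+7 and T+30 (calendar days) after go-live."""
    return [go_live + timedelta(days=offset) for offset in offsets_days]


if __name__ == "__main__":
    for retest in retest_dates(date(2026, 3, 25)):
        print(retest.isoformat())
```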

Link with useful content, conversion and behavioral signals

Speed does not replace clarity: pages must answer intent, as the guidelines on helpful content remind us. After a performance audit, also check that product copy, policies, and FAQs are worthy of the click from the SERP. A visitor who lands on a relevant but slow page may leave; a visitor on a fast page that does not answer anything may leave too. So combine technical review and editorial review.

Behavioral signals (bounce rate, session depth) should be interpreted with caution depending on your analytics, but a clear improvement in INP on the collection filter or in LCP on the product page often translates into less friction in the funnel. Avoid attributing SEO success to a single metric without a time series.

Monitoring cadence and regressions

Performance is a state, not a one-off project. After each major theme update, app installation, or campaign using new scripts, rerun a mini-check on your template URLs. Compare with the baseline recorded during the last full audit. Regressions are common when several teams touch the same storefront without coordination.
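The comparison with the baseline can be automated with a tolerance so that normal measurement noise does not raise an alert. In this sketch the 10% tolerance is an arbitrary assumption to tune for your own data, and the metric values are hypothetical. All three metrics are "lower is better", so only increases are flagged.

```python
def find_regressions(
    baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.10
) -> dict[str, tuple[float, float]]:
    """Return metrics whose current value exceeds the baseline by more than the tolerance."""
    flagged = {}
    for metric, base_value in baseline.items():
        new_value = current.get(metric)
        if new_value is None:
            continue  # metric not measured in this run
        if new_value > base_value * (1 + tolerance):
            flagged[metric] = (base_value, new_value)
    return flagged


if __name__ == "__main__":
    # Hypothetical product page values: last full audit vs. check after an app install.
    audit_baseline = {"LCP": 2300, "INP": 180, "CLS": 0.05}
    after_install = {"LCP": 2900, "INP": 185, "CLS": 0.05}
    print(find_regressions(audit_baseline, after_install))
```

With a baseline of exactly 0 (possible for CLS), any positive value is flagged, which is a conservative default.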

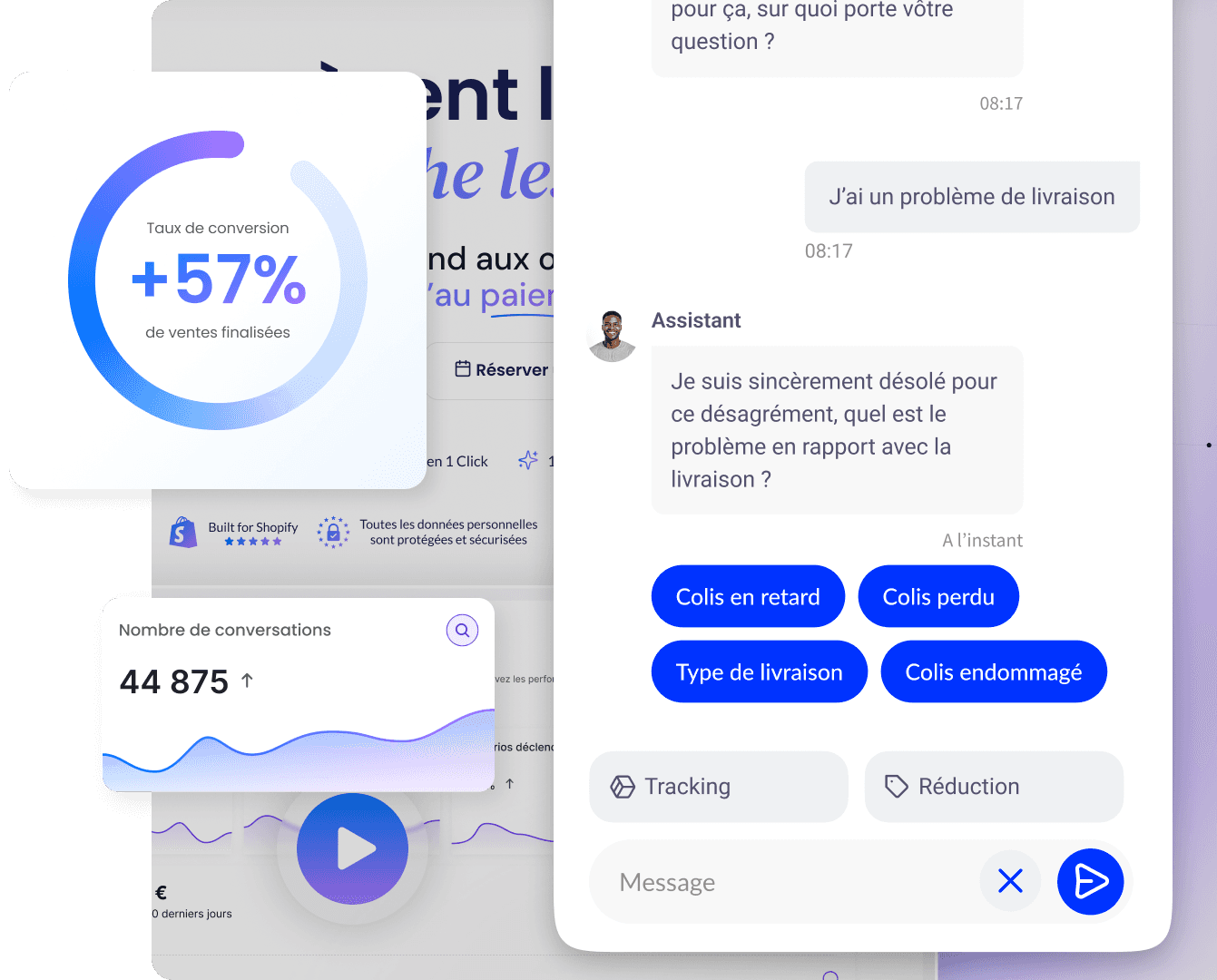

Supplement: onsite engagement and Qstomy

SEO brings qualified traffic; conversion still depends on the dialogue on the page. An assistant like Qstomy can answer product questions, guide users to the right variant, and reduce drop-offs on pages where information is lacking, without weighing down the page's JavaScript the way a stack of poorly integrated widgets would. Think of it as a complement to a healthy technical foundation: see the Shopify integration and the e-commerce chatbot article.

Summary

An SEO performance audit measures and improves LCP, INP and CLS on representative templates, by cross-referencing field and lab data, Google documentation and web.dev guides, Shopify best practices and the realities of the theme and apps. Prioritize by user impact and effort, deliver an actionable report and plan checks after each major change. Always pair this technical layer with a content strategy aligned with search intent.

FAQ

How is this audit different from a general SEO audit?

The general SEO audit also covers keywords, indexing, internal linking and content. The performance audit focuses on perceived speed, stability and responsiveness: Core Web Vitals metrics, scripts, media, theme and apps.

Is a good PageSpeed score enough?

No: the score summarizes lab tests run on a single URL. Validate on business-critical URLs and, if possible, with field data. User experience matters more than a single number.

My INP is bad on the collection page: where do I start?

List the interactions (filters, sorting, infinite scroll). Isolate third-party scripts, measure with the browser's developer tools, then test temporary disabling in a staging environment to confirm the cause.

Are Shopify apps always to blame?

Often, but not systematically: the theme and customizations matter just as much. Inventory and targeted tests make it possible to assign responsibility without generalizing.

Do Core Web Vitals guarantee a better ranking?

Google presents these metrics as experience signals among others. Improve them for users; do not present them internally as a promise of guaranteed ranking.

Should I audit only mobile?

No: audit both, but prioritize mobile if it is your main channel. The Core Web Vitals thresholds are the same on both devices, but the measured values and the bottlenecks differ.

What should I do after delivering the report?

Turn each recommendation into a ticket with an owner and due date. Schedule a post-launch control measurement to confirm the effect on the metrics and, where applicable, on conversion.

Does the performance audit cover accessibility?

This guide does not replace an accessibility audit (RGAA, WCAG). Some issues overlap (for example readability or keyboard focus), but the scope here remains Core Web Vitals and perceived performance.

What if my store is headless?

The metrics remain valid, but the tech stack changes: custom frontend, API, CDN. Extend the audit scope to rendering servers and the JavaScript client that powers the storefront.
