
March 12, 2025

Do you want to improve your products and customer journey, yet feedback collection remains sporadic and the data ends up in files that nobody follows up on? User feedback is only valuable if the process is clearly defined, compliant with privacy rules, and turned back into action. According to Harvard Business Review, which notably summarizes work by Frederick Reichheld (Bain & Company), acquiring a new customer is often several times more expensive than retaining an existing one, depending on the industry, and a modest increase in retention rate can have a noticeable effect on profitability. Without a method, you mostly hear from the extremes (very satisfied or very dissatisfied customers), as Shopify notes in its guidance on feedback tools. Here are five steps to scale the approach without drowning in data.



What is user feedback?

User feedback is any information a customer or visitor gives about their experience: satisfaction, friction, an idea, a complaint. The form varies: survey, public review, interview, chat message, tracked behavior (with consent). As soon as personal data is involved (email, purchase history), the GDPR framework applies: legal basis, information of the data subject, retention period. CNIL's fact sheets on legal bases help you choose the appropriate one (consent, contract, legitimate interest, etc.).

For the strategic view that comes before the operational side, see also the 5 steps for an effective feedback strategy.

Why feedback programs fail

A setup that looks good on paper can remain inactive if no one makes decisions, if the tools do not talk to each other, or if management sees no link to revenue. Common patterns:

No owner: each team sends its own survey, with no consolidated view or prioritization.

Questions that are too long or too vague: the reader gives up, or the answers are not usable.

Listening bias: only public reviews or support tickets are read, while other segments (silent buyers, abandoned carts) remain invisible.

No closed loop: customers do not know whether their comment was used, so they stop responding.

Salesforce's consumer research reports (Connected Customer and related work) emphasize that experience is a continuous signal: your implementation must therefore be a process, not an event.

Collection channels: strengths and limitations

A good channel is not chosen at random: it depends on the type of question (attitude vs behavior), volume, and risk. Nielsen Norman Group reminds us that it is rare to be able to deploy all methods on the same project, but that most projects benefit from combining several approaches and cross-referencing insights. Here is a reading grid (to adapt to your sector):

| Channel | Typical strength | Limitations | When to use it |
|---|---|---|---|
| Post-purchase email | Fresh context, known list | Fatigue if too frequent | Overall CSAT, NPS on customer base |
| In-app or store widget | Specific friction point | Risk of interrupting the journey | Checkout friction, search |
| Support and tickets | Rich verbatims, real reasons | Bias toward problems | Product prioritization, FAQ |
| Public reviews | Visibility, SEO | Extremes, moderation | Reputation, quality signals |
| Interviews | Depth, nuance | Cost, time, sample | New offer, repositioning |
| Analytics and sessions | “Real” behavior | Interpretation, consent | UX prioritization, journey |

“Qualitative methods answer questions of the ‘why’ or ‘how to fix it’ type well, whereas quantitative methods answer the questions ‘how much’ and ‘to what extent’ better.”

Nielsen Norman Group, When to Use Which UX Research Methods

This distinction guides your tool stack: a score alone is not enough without a few verbatims or targeted observations.

Step 1: Define the objectives

Without a measurable objective, you will choose neither the right channel nor the right questions. Specify what you are testing: purchase journey, perceived quality, support, delivery. The usual indicators include NPS (Net Promoter Score), CSAT (customer satisfaction score) and CES (Customer Effort Score); Shopify's guide to NPS explains the value and limits of a single score. Set a baseline (current measurement) and then a target over 3 to 6 months, for example “reduce size-related tickets by 20%” rather than “improve service”.
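To make the baseline concrete, here is a minimal Python sketch of how NPS and CSAT are commonly computed from raw answers. It assumes the usual NPS convention (0-10 scale, promoters at 9-10, detractors at 0-6) and, for CSAT, counts ratings of 4 or 5 on a 1-5 scale as satisfied; that threshold is an assumption to adapt to your own survey. The function names and sample answers are illustrative only.

```python
from typing import Sequence


def nps(scores: Sequence[int]) -> float:
    """Net Promoter Score: % of promoters (9-10) minus % of detractors (0-6)."""
    if not scores:
        raise ValueError("no responses")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100.0 * (promoters - detractors) / len(scores)


def csat(ratings: Sequence[int], satisfied_from: int = 4) -> float:
    """CSAT as the share of ratings at or above a threshold (assumed: 4 on a 1-5 scale)."""
    if not ratings:
        raise ValueError("no responses")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100.0 * satisfied / len(ratings)


# Made-up answers, not real survey results.
baseline_nps = nps([10, 9, 8, 7, 6, 3, 10, 9])   # 4 promoters, 2 detractors out of 8
baseline_csat = csat([5, 4, 3, 5, 2])            # 3 satisfied out of 5
print(baseline_nps, baseline_csat)               # 25.0 60.0
```

Storing the raw answers rather than only the score lets you recompute the baseline later if you change the threshold or the segment.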

SMART framework and milestones

Formulate specific objectives, with a verifiable success indicator (rate, time, volume). Decide who validates the baseline (finance, ops, product) and how often you review the target: a quarter is often enough at the beginning to avoid drift in the questions. Also document what you are not measuring yet: this prevents hasty interpretations.

Examples of measurable objectives

“Achieve an NPS above X over 12 months” (X defined after your first internal measurement)

“Reduce support tickets about clothing sizes by 20%”

“Identify the 3 main friction points at checkout by Q2”

“Measure delivery satisfaction (CSAT) by carrier”
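An objective such as “reduce size-related tickets by 20%” can be checked mechanically once the baseline is recorded. The sketch below is one possible way to do it; the function names and the monthly ticket counts are hypothetical.

```python
def relative_change(baseline: float, current: float) -> float:
    """Relative change versus the baseline; -0.20 means a 20% reduction."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline


def target_met(baseline: float, current: float, target_reduction: float) -> bool:
    """True when the observed reduction reaches the target (0.20 for 'reduce by 20%')."""
    return relative_change(baseline, current) <= -target_reduction


# Hypothetical monthly counts of size-related tickets.
print(relative_change(250, 210))    # -0.16
print(target_met(250, 210, 0.20))   # False: 16% is short of the 20% target
print(target_met(250, 200, 0.20))   # True
```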

International surveys on customer service behavior (Statista) show how much the service experience influences loyalty: your objectives must remain consistent with this requirement.

Step 2: Choose data collection methods

Surveys and questionnaires: closed scales + short open-ended questions. Common tools: Google Forms, SurveyMonkey, Typeform. Think about the channel (email, SMS, in-app): response rates vary greatly depending on context and length.

Interviews and groups: depth for complex topics (new offer, repositioning), with an interview guide and a representative sample.

Behavioral analysis: analytics, heatmaps, session recordings (with consent). Your web pixels enrich the quantitative view.

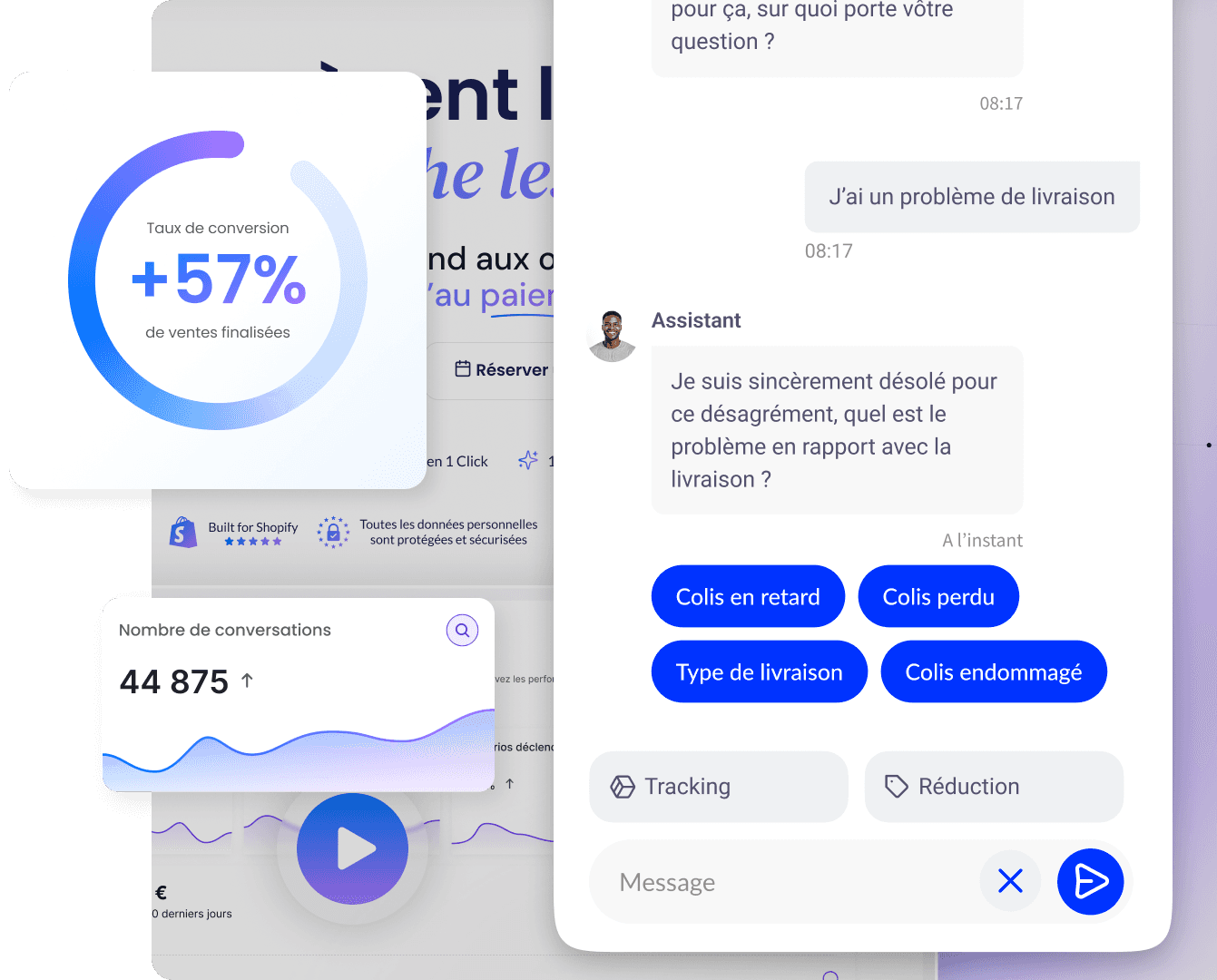

AI chatbot: contextual questions after an action or an error, categorization of reasons. See the e-commerce chatbot guide.

To compare methods, our article on the 5 best collection methods details advantages and limitations.

Criteria for choosing a tool (or a stack)

Before subscribing to a new platform, test a small scope: one product, one language, one segment. The table below summarizes common decision criteria (not exhaustive):

| Criterion | Questions to ask yourself | Example of a good practice |
|---|---|---|
| GDPR and hosting | Where are the responses stored? Who processes the data? | Signed DPA; DPIA if the data is sensitive or high-volume |
| Integrations | Shopify, helpdesk, CRM, BI | Avoid permanent manual exports |
| Segmentation | By channel, country, cart | Avoid identical questionnaires for everyone |
| Length | Target completion time | Prefer a few well-chosen questions |
| Languages | Covered markets | Consistent translation and tone |

Step 3: Build the team

The quality of feedback also depends on how you listen to it. Train marketing, support, and product to:

ask neutral questions (“How would you describe…?” rather than “Did you love it?”);

welcome negative feedback without minimizing it, and offer a next step;

clarify who sends what to whom and by when (shared tool or CRM).

Customer experience issues and expectations around responsiveness are highlighted in recent benchmarks (Zendesk CX Trends): your team must be aligned on the same service promise.

Prepare an internal guide: tone of public responses, response times, legal escalation if sensitive data is mentioned. Consistency matters as much as speed.

Governance: roles and cadence

Without governance, feedback gets lost between silos. A simple matrix (inspired by the “who does what” model) clarifies the value chain:

| Topic | Owner | Contributors | Frequency |
|---|---|---|---|
| Choice of questions and milestones | Product or CX | Marketing, support | Monthly at first |
| Data quality and access | Ops or IT | Legal | Quarterly |
| Communication to customers | Marketing | Product | With each major release |
| Backlog prioritization | Product | Leadership | Biweekly |

The review cadence must remain realistic: a 30-minute meeting with a fixed agenda (new topics, decisions, open actions) often beats a monthly committee that is too long.

Step 4: Analyze and process

Centralize feedback (spreadsheets, helpdesk, knowledge base), then code the verbatims, that is, tag each one with its recurring themes. Cross-reference qualitative and quantitative data, and prioritize with an impact / effort or impact / risk matrix. Possible tools: pivot tables, BI, or qualitative coding software (NVivo, ATLAS.ti) for large volumes. To go further into thematic coding methods, Nielsen Norman Group's article on thematic analysis provides useful guidance for structuring qualitative corpora.
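As an illustration of coding verbatims, here is a deliberately simple keyword-based sketch in Python. It is not a substitute for manual thematic analysis or for the tools named above: the codebook, themes and keywords are hypothetical and would normally come out of a first manual read of your own feedback.

```python
import re
from collections import Counter

# Hypothetical codebook: theme -> keywords observed during a first manual read.
CODEBOOK = {
    "sizing": ("size", "too small", "too large"),
    "delivery": ("delivery", "late", "carrier", "shipping"),
    "checkout": ("payment", "checkout", "promo code"),
}


def code_verbatim(text: str) -> list[str]:
    """Return every theme with a keyword present as a whole word (case-insensitive)."""
    lowered = text.lower()
    themes = [
        theme
        for theme, keywords in CODEBOOK.items()
        if any(re.search(rf"\b{re.escape(k)}\b", lowered) for k in keywords)
    ]
    return themes or ["uncoded"]


def theme_counts(verbatims: list[str]) -> Counter:
    """Count how many verbatims mention each theme."""
    counts: Counter = Counter()
    for verbatim in verbatims:
        counts.update(code_verbatim(verbatim))
    return counts


sample = [
    "The size was too small and delivery was late",
    "Promo code refused at checkout",
    "Great shop",
]
print(theme_counts(sample).most_common())
```

Anything that lands in "uncoded" is worth reading by hand: that is usually where new themes for the codebook come from.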

The method is detailed in our guide to feedback analysis in 5 steps.

Prioritization: impact, effort and risk

Before starting development, classify the topics:

| Area | Example signal | Typical action |
|---|---|---|
| High impact, low effort | Missing FAQ, misleading content | Content, micro-UX |
| High impact, high effort | Logistics overhaul | Roadmap, budget |
| Low impact, but legal risk | Legal notices, consent | Legal priority |
| Noise or duplicate | Isolated complaint | Monitor, do not overreact |
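The table above can be turned into a small triage rule. The sketch below is one possible reading of it, not a standard method: the 1-5 scales, the thresholds (`high`, `min_mentions`) and the labels returned are assumptions to calibrate with your team.

```python
from dataclasses import dataclass


@dataclass
class Topic:
    name: str
    impact: int        # 1 (low) to 5 (high), scored by the team
    effort: int        # 1 (low) to 5 (high)
    legal_risk: bool   # e.g. consent or legal notices
    mentions: int      # number of distinct customers raising the topic


def classify(topic: Topic, high: int = 4, min_mentions: int = 2) -> str:
    """Map a topic to one of the four areas of the prioritization table."""
    if topic.legal_risk:
        return "legal priority"      # handled first, whatever the impact
    if topic.mentions < min_mentions:
        return "monitor"             # isolated complaint: do not overreact
    if topic.impact >= high:
        return "quick win" if topic.effort < high else "roadmap"
    return "monitor"


backlog = [
    Topic("Missing size FAQ", impact=5, effort=1, legal_risk=False, mentions=12),
    Topic("Logistics overhaul", impact=5, effort=5, legal_risk=False, mentions=30),
    Topic("Consent wording", impact=1, effort=2, legal_risk=True, mentions=1),
    Topic("One-off complaint", impact=5, effort=1, legal_risk=False, mentions=1),
]
for topic in backlog:
    print(topic.name, "->", classify(topic))
```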

Step 5: Take action and follow up

Treat feedback like a roadmap: an owner per topic, delivery criteria, and communication to the relevant customers. High-performing organizations “close the loop”: they inform users of the changes made based on their remarks, which builds trust and future participation. That is the heart of an effective feedback loop. Without this step, collecting feedback discourages your most engaged customers.

Define KPIs before rollout: changes in NPS or CSAT, ticket volume, conversion rate on the corrected pages. Research on the value of loyalty (HBR) reminds us why this loop deserves a budget and product time.

Setup checklist

Written objectives and indicators

Chosen collection method(s), configured tool(s), up-to-date legal notices

Defined collection times (post-purchase, post-support, quarterly…)

Roles and escalation deadlines

Rule for prioritizing actions (matrix or backlog)

Communication plan “you told us, we did X”

Personal data and legal framework

As soon as you collect email addresses, segments, purchase history or ticket content, you must document the purpose, retention period and rights of individuals (access, rectification, objection as applicable). Explanations of the GDPR at the European level are available on the European Commission's data protection portal: useful for framing the general obligations and links with case law.
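A documented retention period is easier to respect when it is checked automatically. Here is a minimal sketch, assuming each feedback record carries a `collected_on` date; the field names and the 365-day value are purely illustrative and are not a legal recommendation, since the right period depends on your purpose and legal basis.

```python
from datetime import date, timedelta

# Assumption for illustration only: take the real value from your documented policy.
RETENTION_DAYS = 365


def is_expired(collected_on: date, today: date, retention_days: int = RETENTION_DAYS) -> bool:
    """True when the record is older than the retention period."""
    return today - collected_on > timedelta(days=retention_days)


def split_by_retention(records: list[dict], today: date) -> tuple[list[dict], list[dict]]:
    """Separate records to keep from records to delete or anonymize."""
    keep, purge = [], []
    for record in records:
        (purge if is_expired(record["collected_on"], today) else keep).append(record)
    return keep, purge


# Hypothetical records.
records = [
    {"id": 1, "collected_on": date(2023, 1, 1)},
    {"id": 2, "collected_on": date(2025, 1, 1)},
]
keep, purge = split_by_retention(records, today=date(2025, 3, 12))
print([r["id"] for r in keep], [r["id"] for r in purge])   # [2] [1]
```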

“Personal data shall be: (a) processed lawfully, fairly and in a transparent manner in relation to the data subject.”

Regulation (EU) 2016/679 (GDPR), Article 5(1)(a)

On the e-commerce side: update your privacy policy whenever you add a new survey tool, sync audiences to advertising networks, or import data into a CRM. See also the CNIL guidance on advertising targeting if you combine feedback and campaigns.

The benefits

Management literature and market data converge: retention and customer experience are drivers of profitability. Harvard Business Review cites Reichheld (Bain) on the effect of a limited increase in retention rate on profits. Surveys on customer service behavior (Statista) also show that a positive experience strengthens repurchase intention.

Decisions based on signals rather than intuition

Continuous product and content improvement

Early detection of irritants

Strengthening trust when customers see concrete follow-up

Best practices and mistakes to avoid

Best practices

Start simple: a well-calibrated post-purchase survey before layering on channels.

Shorten questionnaires: each additional question lowers completion; response rate benchmarks vary by channel and industry (Retently, SurveySparrow).

Choose the right time: after delivery for the overall experience, right after support for an incident.

Respond to reviews and criticism: a helpful, human response limits negative amplification on public channels.

Document decisions: a short internal log (topic, decision, date) avoids later debates with nothing on record.

Mistakes to avoid

Collecting without an owner or prioritization

Ignoring GDPR (purpose, retention, access rights)

Promising changes without following up internally

Over-interpreting a small sample: supplement with other data before making an expensive pivot
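To see why a small sample is fragile, you can put a confidence interval around a satisfaction rate. The sketch below uses the Wilson score interval, a standard choice for proportions; it is an illustration added here, not a method prescribed by the sources cited in this article, and the figures are made up.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 for about 95% confidence)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Same observed rate (80% satisfied), very different certainty.
small = wilson_interval(8, 10)
large = wilson_interval(800, 1000)
print([round(x, 2) for x in small])
print([round(x, 2) for x in large])
```

With 8 positive answers out of 10, the interval runs from roughly 49% to 94%; with 800 out of 1,000 it narrows to roughly 77% to 82%. The first result is compatible with both a mediocre and an excellent experience, which is why it should not drive an expensive pivot on its own.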

Automate data collection with a chatbot

An assistant like Qstomy can capture questions and immediate feedback on the store, categorize them by theme, and relieve support from repetitive requests, as a complement to structured surveys. Shopify best practices on feedback tools emphasize automating collection and analysis to save time. See the AI chatbot integration on Shopify.

Summary

Implementing user feedback properly means: measurable objectives (SMART, with a baseline), methods suited to the context, trained teams, clear governance, structured analysis, then visible actions that are measured. Cross-check the perspectives of HBR / Bain, Statista, Shopify, Zendesk and Nielsen Norman Group, together with the legal framework (CNIL, European Commission), against your on-the-ground reality. The checklist and prioritization tables help avoid operational and legal oversights.

FAQ

Which method should you choose first?

Often the post-purchase survey and behavioral analysis: they are quick to implement and reveal trends. Add the chatbot once the flows have stabilized.

How should you prioritize feedback?

Use a customer impact / implementation effort matrix, or a weighted score if you have several criteria (legal risk, ticket volume, revenue at risk).
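A weighted score can be as simple as the sketch below. The criteria mirror those mentioned in the answer, but the weights, the 1-5 scale and the topic names are hypothetical and should be agreed on by the team before any ranking is trusted.

```python
# Hypothetical weights (they sum to 1); every criterion is scored on the same 1-5 scale.
WEIGHTS = {
    "customer_impact": 0.4,
    "ticket_volume": 0.2,
    "revenue_at_risk": 0.2,
    "legal_risk": 0.2,
}


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Weighted sum of the criteria; raises if a criterion has not been scored."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    return sum(weights[c] * scores[c] for c in weights)


def rank(topics: dict[str, dict[str, float]]) -> list[str]:
    """Topic names sorted from highest to lowest weighted score."""
    return sorted(topics, key=lambda name: weighted_score(topics[name]), reverse=True)


# Made-up scores for two topics.
topics = {
    "size guide": {"customer_impact": 5, "ticket_volume": 4, "revenue_at_risk": 3, "legal_risk": 1},
    "cookie banner": {"customer_impact": 2, "ticket_volume": 1, "revenue_at_risk": 1, "legal_risk": 5},
}
print(rank(topics))   # ['size guide', 'cookie banner']
```

A score like this only orders the backlog; a topic with a high legal risk may still need to be handled first regardless of its rank.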

Can a chatbot replace surveys?

No, it complements them: the chatbot brings spontaneity and context, while well-targeted questionnaires bring representativeness and long-term series.

How often should you collect feedback?

Continuously for weak signals (chat, reviews), and at fixed milestones for longer surveys (quarter, half-year).

How long for a first implementation?

Two to four weeks for a first loop (objectives, one channel, tracking dashboard, owner) is realistic in an e-commerce SME.

What response rate should you aim for?

It depends on the channel, length and segment; response-rate studies (Retently, SurveySparrow) provide ballpark figures, not a universal target. Measure your own baseline.

How can you reduce bias?

Vary the sources (behavior, verbatims, scores), avoid leading questions, and cross-check with a representative sample when possible. The Nielsen Norman Group reminds us of the value of combining multiple methods.

What should you watch for internationally?

Languages, carriers, claim handling times, and local requirements: the same questions can produce different averages depending on the market. Segment dashboards before comparing.
