
March 25, 2025

You collect customer feedback, but the comments pile up in spreadsheets, support tools, or inboxes without feeding a clear roadmap. The problem is often not a lack of data: it is the absence of a method for turning heterogeneous comments into prioritized decisions. This guide proposes five operational steps for e-commerce, drawing on recognized references: thematic analysis as described by the Nielsen Norman Group, the collection and interpretation practices described on the Shopify blog on feedback tools, and a product-oriented reading of metrics (NPS, CSAT, ticket volume). You will find tables, safeguards, and references to our complementary articles on collection and the feedback loop.


What is feedback analysis?

Feedback analysis is the process that transforms raw feedback (surveys, tickets, public reviews, chat messages, internal notes) into actionable insights: recurring themes, priorities, cause hypotheses, and ideas for product or journey improvements. Without analysis, you have opinions; with a method, you can align product, marketing, and support teams around traceable decisions. The quality of insights depends above all on the quality of the collection and the context: the same word can refer to different situations depending on the channel (post-purchase, complaint, simple curiosity).

To set the stage, make sure you have a collection strategy that is consistent with your objectives. Analysis does not “fix” biased collection: it only makes it readable.

Why structure the analysis: rich data and bias

The Shopify blog reminds us of a common pitfall: if you only wait for customers to come to you, you mostly hear the extremes (very satisfied or very dissatisfied) and you risk misrepresenting the views of the “middle” of your customer base. Hence the value of tools and processes that make it possible to broaden representativeness, organize responses, and identify patterns in the data to measure satisfaction and drive improvements.

“No matter what you think of your service: what matters is what your customers think.”

Shopify, article on customer feedback tools (free translation from English)

This principle applies to e-commerce as well as SaaS: measurement must reflect the reality experienced by buyers, not just internal conviction. A structured analysis also helps avoid two biases: recency bias (the latest complaint outweighs the trend) and confirmation bias (interpreting feedback to confirm what we already believed).

Step 1: Gather actionable feedback

Effective data collection combines several moments and formats: post-purchase surveys, a short survey after a resolved ticket, a question on the product page or checkout, session recordings on friction pages. The goal is not to multiply long questionnaires: it is better to ask a few well-posed questions at relevant moments than to have an exhaustive survey that no one completes.

Useful moments in e-commerce

After delivery: ask whether the experience matched expectations (product, packaging, delivery times).

After support: CSAT or an open-ended question about the problem resolution.

On the product page: “Did you find the size / compatibility information?”

Cart abandonment: a single question with limited choices can be enough to understand the main friction.

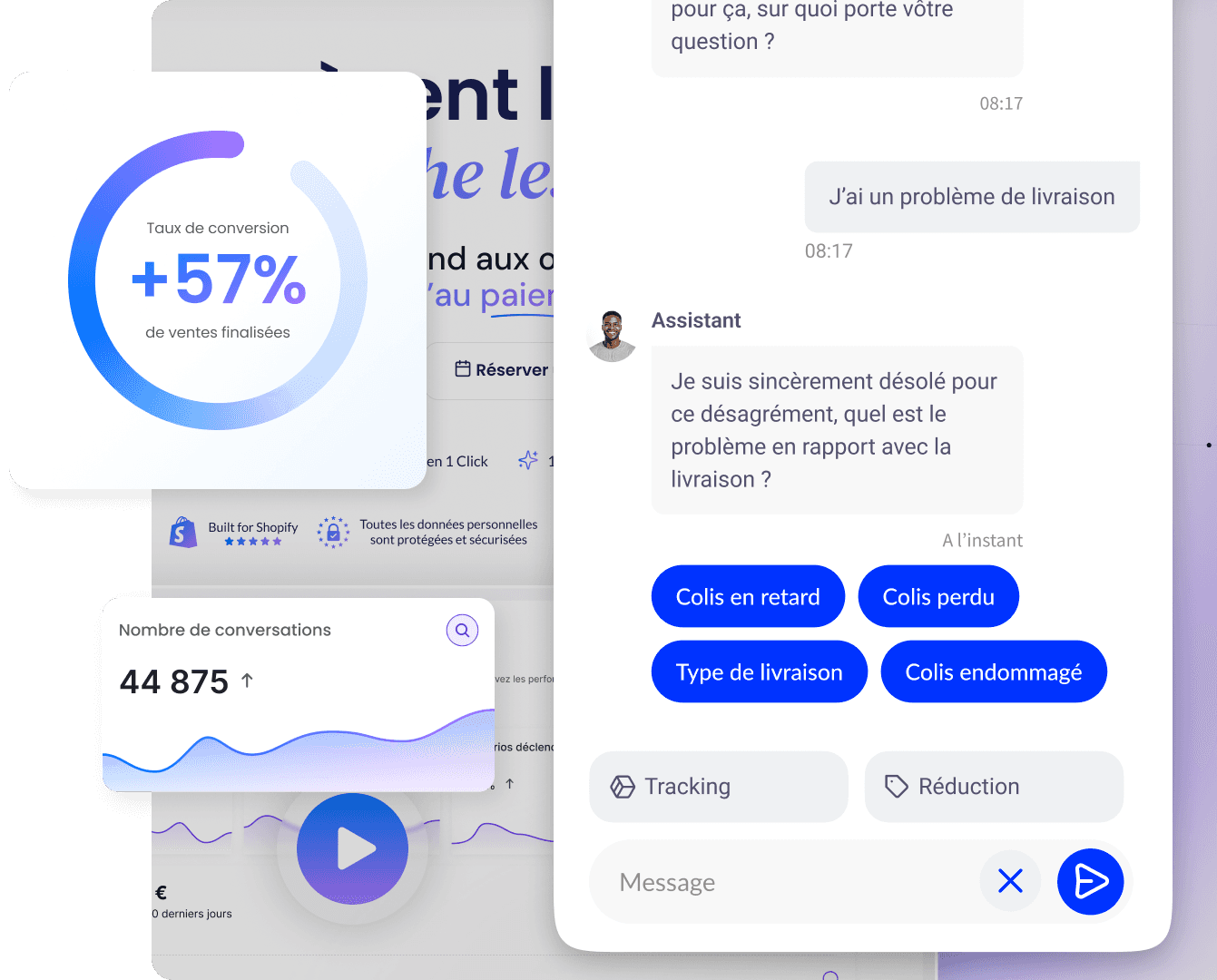

An e-commerce chatbot can ask these questions in the flow, with a low cognitive cost for the user. Think about indicating how you will use the responses: transparency often increases participation.

Step 2: Centralize, clean, categorize

Before any analysis, gather the sources in a single repository (shared spreadsheet, ticketing tool, feedback platform, or data warehouse). Standardize the fields: date, channel, segment (new customer, recurring), product or collection, sentiment if you are doing classification, and raw text. Remove obvious duplicates and off-topic messages (spam, form errors).

| Source | What it captures well | Watch out for |
|---|---|---|
| Structured surveys | Trends, scores (NPS, CSAT), comparisons over time | Question wording, response fatigue |
| Support tickets | Real pain points, specific blockers | Overrepresentation of “visible” issues |
| Public reviews | Social proof, recurring themes | Extreme bias, variable volume |
| Behavior (analytics, heatmaps) | Where the journey gets blocked | Does not always say “why” without verbatim |

This step feeds your feedback loop: without stable categories, you won't be able to compare period N to period N+1.
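The standardization and deduplication described in this step can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the `FeedbackItem` class, its field names, and the rule "same channel and same normalized text means duplicate" are assumptions chosen for the example, and you would adapt them to your own repository.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class FeedbackItem:
    """One standardized feedback record (field names are illustrative)."""
    received: date
    channel: str   # e.g. "survey", "ticket", "review"
    segment: str   # e.g. "new", "recurring"
    product: str
    text: str      # raw text, kept as written by the customer


def normalize_text(text: str) -> str:
    """Lowercase and collapse whitespace so near-identical copies compare equal."""
    return " ".join(text.lower().split())


def deduplicate(items: list[FeedbackItem]) -> list[FeedbackItem]:
    """Keep the first occurrence of each (channel, normalized text) pair."""
    seen: set[tuple[str, str]] = set()
    unique: list[FeedbackItem] = []
    for item in items:
        key = (item.channel, normalize_text(item.text))
        if key in seen:
            continue
        seen.add(key)
        unique.append(item)
    return unique


items = [
    FeedbackItem(date(2025, 3, 1), "ticket", "new", "sneakers", "Delivery was late"),
    FeedbackItem(date(2025, 3, 1), "ticket", "new", "sneakers", "delivery  was late "),
    FeedbackItem(date(2025, 3, 2), "review", "recurring", "sneakers", "Delivery was late"),
]
print(len(deduplicate(items)))  # the second ticket is dropped, the review is kept
```

The same sentence arriving through two different channels is deliberately kept twice here: it counts as two independent signals, which matters later when you check whether a theme recurs across channels.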

Thematic analysis: from text to themes

For verbatim quotes and open-ended comments, the most proven approach in user research is thematic analysis: you label text segments with codes, then group the codes into themes when similar elements recur. The Nielsen Norman Group defines this approach as a systematic method for organizing rich qualitative data and bringing out meaningful themes.

“Thematic analysis is a systematic method for breaking down and organizing rich qualitative data by labeling individual observations and quotes with appropriate codes, in order to facilitate the discovery of meaningful themes.”

Nielsen Norman Group, How to Analyze Qualitative Data from UX Research: Thematic Analysis (free translation)

A theme describes a belief, a need, or an observable phenomenon in the data; it emerges when related elements appear several times, across customers or different channels. In e-commerce practice, your codes can be “shipping,” “size,” “payment,” “customer support,” then refined (“promised vs actual lead time,” “missing payment methods”).
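To speed up a first pass, some teams pre-tag verbatims with keyword rules before a human reviews them. The sketch below is only that kind of helper, not thematic analysis itself: the `CODEBOOK` contents are hypothetical, and plain substring matching is crude (for example, “late” also matches “related”), so every suggestion still needs a human reading.

```python
from collections import Counter

# Hypothetical codebook: each code maps to keywords that suggest it.
CODEBOOK = {
    "shipping": ["delivery", "shipping", "carrier", "late"],
    "size": ["size", "fit", "too small", "too large"],
    "payment": ["payment", "card", "paypal"],
}


def suggest_codes(text: str) -> list[str]:
    """Return every code whose keywords appear in the text (substring match)."""
    lowered = text.lower()
    return [
        code
        for code, keywords in CODEBOOK.items()
        if any(keyword in lowered for keyword in keywords)
    ]


def count_codes(verbatims: list[str]) -> Counter:
    """Count how many verbatims were tagged with each code."""
    counts: Counter = Counter()
    for text in verbatims:
        counts.update(suggest_codes(text))
    return counts


verbatims = [
    "The delivery arrived late",
    "Size runs too small",
    "My card was declined at payment",
    "Delivery ok but the size chart is confusing",
    "Great shop",
]
for code, mentions in count_codes(verbatims).most_common():
    print(code, mentions)
```

Verbatims that receive no code (“Great shop” here) are worth reading manually: they are often where new codes come from.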

Common challenges (and how to avoid them)

The Nielsen Norman Group highlights several pitfalls: time-consuming data volume, superficial analysis limited to memorable quotes, difficulty separating the useful from the superfluous, contradictory data, or analysis that simply paraphrases without reasoning. Without a process, you fall back into improvisation. Hence the value of tools (spreadsheets, coding software) or team refinement workshops to converge on a list of themes.

| Challenge | Consequence | Pragmatic response |
|---|---|---|
| Too much raw data | Fatigue, forgetting important passages | Stratified sampling by channel and priority |
| Superficial analysis | Focus on “striking” phrases | Full reading in batches, shared codes |
| Contradictions | Uncertainty about the decision | Separate the segments (e.g. mobile vs desktop) |
| Description without synthesis | Report unusable for teams | Rephrase as “insight + implication” |
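Stratified sampling, mentioned in the table as a response to excess volume, can be illustrated as follows. This is a sketch under simple assumptions: items are dictionaries with a `channel` key, each stratum contributes the same fixed number of items, and a fixed seed keeps the sample reproducible. The function name and parameters are illustrative.

```python
import random
from collections import defaultdict


def stratified_sample(items, key, per_group, seed=0):
    """Draw up to `per_group` random items from each group defined by `key`."""
    rng = random.Random(seed)  # fixed seed so the same sample can be re-drawn
    groups = defaultdict(list)
    for item in items:
        groups[key(item)].append(item)

    sample = []
    for name in sorted(groups):
        members = groups[name]
        # Small groups are taken in full instead of raising an error.
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample


feedback = (
    [{"channel": "ticket", "text": f"ticket {i}"} for i in range(50)]
    + [{"channel": "review", "text": f"review {i}"} for i in range(5)]
    + [{"channel": "survey", "text": f"survey {i}"} for i in range(2)]
)
sample = stratified_sample(feedback, key=lambda item: item["channel"], per_group=3)
print(len(sample))  # 3 tickets + 3 reviews + 2 surveys
```

Sampling per channel prevents the largest source (tickets in this example) from crowding out reviews and surveys in the batch you actually read.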

Validate themes and clarify codes

Nielsen Norman Group’s documentation on thematic analysis recommends subjecting themes to scrutiny: a theme must be well supported by the data, with enough instances to be useful, and it is relevant to involve other people who have read the data to limit interpretation bias. In an e-commerce context, have support and product review the synthesis before freezing a roadmap: you will quickly spot overly optimistic or overly defensive readings.

Also distinguish between descriptive codes (what the customer says) and interpretive codes (your reading of the underlying problem). This distinction, described in the same article, avoids mixing quote and diagnosis when several contributors code the same verbatims. Finally, plan realistic analysis time: for rich data, Nielsen Norman Group indicates that it is often relevant to budget at least as much time for analysis as for collection.

Step 3: Extract verifiable insights

An actionable insight connects an observation to a cause hypothesis and an action path. Avoid unsupported generalizations: replace “customers don't like delivery” with “several independent feedback items mention a gap between the promised delivery time and carrier tracking on line X”. Cross-check qualitative findings with quantitative ones: approximate tag frequency, changes in ticket volume for a given issue, correlation with an abandonment rate at a step in the funnel.

Examples of useful formulations (illustrative)

Observation + action: “Repeated questions about sizes across three channels”: enrich the size guide and visuals on the relevant product pages.

Observation + action: “Friction at the checkout step on mobile”: targeted UX audit and testing on real devices.

Observation + action: “Post-support dissatisfaction about first response time”: adjust staffing or waiting messages, not just the script.

The goal is not to achieve absolute statistical certainty at the outset: it is to produce testable, prioritized hypotheses. For the structured implementation of changes, see also the 5 steps to implement feedback.
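One way to keep insights verifiable is to store, for each theme, how many items mention it and through which channels. The sketch below assumes feedback has already been tagged as `(theme, channel)` pairs; the thresholds in `well_supported` (at least three mentions across at least two channels) are illustrative assumptions, not a rule from the sources cited in this guide.

```python
from collections import defaultdict


def theme_evidence(tagged_items):
    """Summarize mentions and distinct channels for each theme.

    `tagged_items` is an iterable of (theme, channel) pairs.
    """
    mentions = defaultdict(int)
    channels = defaultdict(set)
    for theme, channel in tagged_items:
        mentions[theme] += 1
        channels[theme].add(channel)
    return {
        theme: {"mentions": mentions[theme], "channels": sorted(channels[theme])}
        for theme in mentions
    }


def well_supported(evidence, min_mentions=3, min_channels=2):
    """Return themes that meet both (assumed) thresholds."""
    return [
        theme
        for theme, summary in evidence.items()
        if summary["mentions"] >= min_mentions
        and len(summary["channels"]) >= min_channels
    ]


tagged = [
    ("size", "ticket"),
    ("size", "review"),
    ("size", "chat"),
    ("gift wrap", "review"),
    ("delivery delay", "ticket"),
    ("delivery delay", "ticket"),
    ("delivery delay", "ticket"),
]
evidence = theme_evidence(tagged)
print(well_supported(evidence))
```

In this example “delivery delay” has three mentions but only one channel, so it stays a hypothesis to investigate rather than a cross-channel finding.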

Step 4: Prioritize and plan actions

Prioritize using a grid that crosses impact (on satisfaction, revenue, support costs) with feasibility (technical effort, dependencies, risks). High-impact, low-effort “quick wins” should come first: they demonstrate the value of the approach and help secure attention for larger projects.

| Quadrant | Interpretation | Typical example |
|---|---|---|
| High impact, high feasibility | Immediate priority | Fix an incorrect FAQ, add a missing icon |
| High impact, low feasibility | Structured roadmap | Redesigning the checkout flow |
| Low impact, high feasibility | Set of small improvements | Microcopy, internal links |
| Low impact, low feasibility | Often deferred | Isolated, hard-to-replicate requests |
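The quadrant logic can be expressed as a small classifier. This sketch assumes the team scores each action from 1 to 5 on impact and feasibility and treats 3 or more as “high”; the `Action` class, the scale, the threshold, and the ordering of the two middle quadrants are assumptions made for illustration.

```python
from dataclasses import dataclass

# Quadrant labels from the table, in an assumed priority order.
QUADRANT_ORDER = [
    "Immediate priority",
    "Structured roadmap",
    "Set of small improvements",
    "Often deferred",
]


@dataclass
class Action:
    name: str
    impact: int       # 1 (low) to 5 (high), scored by the team
    feasibility: int  # 1 (hard) to 5 (easy), scored by the team


def quadrant(action: Action, threshold: int = 3) -> str:
    """Map an action to its quadrant label."""
    high_impact = action.impact >= threshold
    high_feasibility = action.feasibility >= threshold
    if high_impact and high_feasibility:
        return "Immediate priority"
    if high_impact:
        return "Structured roadmap"
    if high_feasibility:
        return "Set of small improvements"
    return "Often deferred"


def prioritize(actions: list[Action]) -> list[Action]:
    """Sort by quadrant order, then by descending impact within a quadrant."""
    return sorted(
        actions,
        key=lambda a: (QUADRANT_ORDER.index(quadrant(a)), -a.impact),
    )


backlog = [
    Action("Redesign the checkout flow", impact=5, feasibility=2),
    Action("Fix an incorrect FAQ", impact=4, feasibility=5),
    Action("Improve microcopy", impact=2, feasibility=5),
]
for action in prioritize(backlog):
    print(f"{quadrant(action)}: {action.name}")
```

The scores themselves remain a team judgment; the code only makes the resulting order explicit and repeatable.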

Assign an owner per action, a realistic deadline, and a measurable success criterion (drop in the number of tickets for a given issue, improvement in a score, lower abandonment rate at a step). Document decisions to avoid reopening the same debates each quarter.

Step 5: Measure the impact and close the loop

After deployment, reschedule a targeted collection or monitor follow-up indicators: changes in NPS or CSAT for the relevant scope, tickets with the same code, recent reviews. Compare over windows that are consistent with your seasonality (sales, holidays, launches). Shopify notes that the right tools make it possible to establish baselines of satisfaction and track improvement over time, including through metrics such as CSAT, NPS, or CES when they are used consistently.

Indicators to track (depending on your maturity)

NPS: useful for the overall trend if the collection method remains constant.

CSAT: by channel (support, delivery) to isolate causes.

Volume and reasons for tickets: reduction of a theme after a fix.

Drop-off rate by step: before / after UX change.
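For reference, the indicators above can be computed as follows. The sketch uses the common conventions: NPS on a 0 to 10 scale (promoters score 9 or 10, detractors 0 to 6) and CSAT as the share of ratings of 4 or 5 on a 5-point scale. If your survey uses another scale, adjust the thresholds; the function names are illustrative.

```python
def nps(scores: list[int]) -> float:
    """Net Promoter Score: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("NPS needs at least one response")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def csat(ratings: list[int], satisfied_from: int = 4) -> float:
    """CSAT: percentage of ratings at or above `satisfied_from` (5-point scale)."""
    if not ratings:
        raise ValueError("CSAT needs at least one response")
    satisfied = sum(1 for r in ratings if r >= satisfied_from)
    return 100 * satisfied / len(ratings)


def relative_change(before: float, after: float) -> float:
    """Relative change between two periods (negative means a decrease)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before


print(nps([10, 9, 8, 7, 6, 3, 10, 9, 5, 10]))  # 5 promoters, 3 detractors -> 20.0
print(csat([5, 4, 3, 5, 2]))                   # 3 satisfied out of 5 -> 60.0
print(relative_change(before=40, after=28))    # tickets on a theme, before vs after a fix
```

Use `relative_change` only on comparable windows (same length, similar seasonality), as recommended above.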

Without a closed feedback loop, customers do not see the effect of their feedback: communicate when a major improvement solves a frequently cited problem (newsletter, banner, response to reviews).

Roles and governance

A useful analysis is a team effort. Marketing can own the synthesis of surveys, support the quality of tickets, and product the prioritization of roadmaps. Set up an aggregation meeting (monthly or bimonthly) and a single output format: one page “monthly themes”, three decisions made, three follow-ups. Limit the circulation of unversioned files: a single source of truth for themes and actions.

| Role | Typical contribution |
|---|---|
| Feedback owner | Framework, data quality, review schedule |
| Support / CX | Ticket categorization, concrete examples |
| Product / Ops | Prioritization, feasibility, testing |
| Marketing | Customer communication, message testing |

What you gain with regular analysis

Alignment: fewer debates based on isolated anecdotes.

Learning speed: mistakes are corrected before they become reputational crises.

Support efficiency: reduction in repetitive requests when the root cause is addressed.

Customer trust: buyers see that their feedback matters when you close the loop honestly.

Case studies published by tool vendors can illustrate spectacular gains: above all, remember the logic (measurement, prioritization, iteration) rather than percentages copied out of context.

Best practices and mistakes to avoid

Best practices

Cross-check multiple sources before concluding (survey + ticket + analytics).

Share summaries internally, not just raw tables.

Document the sample's limitations (“strong testimony but few occurrences”).

Common mistakes

Collecting without analyzing: dormant data improves nothing.

Over-interpreting a small volume: use small samples to generate hypotheses, not laws.

Changing the questionnaire every month: you lose comparability.

Automating collection with Qstomy

An AI chatbot like Qstomy can ask short questions at the right moment in the journey, classify intents, and feed your analytics base with less friction than a static form. Automation does not replace human review of sensitive themes: it reduces the marginal cost of an ongoing customer voice. Discover the AI chatbot integration on Shopify.

Summary

Analyzing feedback means first avoiding extreme bias and organizing sources; then coding and grouping verbatims into themes, as recommended by Nielsen Norman Group's work on thematic analysis; finally prioritizing by impact and feasibility, measuring, and communicating. The five steps (collection, centralization, analysis, action, measurement) form a loop: without the last one, you never validate whether your changes were useful.

FAQ

What tools should you use to analyze feedback?

Spreadsheets and BI tools for aggregation; ticketing software for support; survey solutions for quantitative data. Qualitative coding software exists for large volumes; many SMBs succeed with a disciplined spreadsheet and a shared tag grid. See also the categories described by Shopify: surveys, polls, user testing, sentiment analysis, reviews.

How do you prioritize without doing everything at once?

Use the impact / feasibility matrix and limit the number of initiatives per sprint. A small number of well-tracked actions is better than a long list with no owner.

How often should you analyze?

Continuously for alerts (ticket spikes, clustered negative reviews); on a fixed cadence (monthly or bimonthly) for trends and comparison over time.

How much feedback is needed to draw conclusions?

It depends on the decision risk: a copy hypothesis can be tested quickly; a returns policy change requires more perspective and representative volumes. Don't set a universal magic threshold: instead document your margin of uncertainty.
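One possible way to document that margin of uncertainty for a proportion (for example, the share of respondents citing delivery as a problem) is a Wilson score interval. This is a sketch of a standard statistical formula, offered as one option among others; it assumes responses are independent and roughly representative, which biased collection can violate.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is about 95% confidence)."""
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= successes <= n:
        raise ValueError("successes must be between 0 and n")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Same observed share (30%), very different certainty depending on volume.
for successes, n in [(6, 20), (60, 200)]:
    low, high = wilson_interval(successes, n)
    print(f"{successes}/{n}: between {low:.0%} and {high:.0%}")
```

With 20 responses the interval is wide enough to call for caution; with 200 it narrows considerably, which is the practical meaning of “representative volumes” for higher-risk decisions.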

Does AI replace the analyst?

It helps tag, summarize, and group; business validation and context understanding remain human, especially for sensitive or legal topics.

Is NPS enough?

It's a useful relationship indicator in trend analysis, rarely sufficient on its own: supplement with contextual questions (post-support CSAT, reasons for abandonment) and verbatims.
